The NVIDIA Titan V Deep Learning Deep Dive: It's All About The Tensor Cores

by Nate Oh on July 3, 2018 10:15 AM EST

Final Words

As we bring this deep dive to a close, I should state that DL performance benchmarking on GPUs is very much an ongoing project. For one, everything we've discussed today has been limited to image classification and CNNs, which is very standard as far as GPU deep learning goes, and correspondingly standard in the insight it offers. It also plays to some of the traditional DL strengths of GPUs (and of tensor cores and library support, for that matter), and in the end, the only featured end-to-end tests were the CIFAR10 implementations.

What we didn't cover was the plethora of failed benchmark runs from various other tests and suites. It proved impossible to find, piecemeal or whole, an up-to-date DL suite that ran modern workloads, provided end-to-end metrics, covered multiple ML domains, included tensor core and mixed precision support, and could reasonably be accessed and used by a non-developer.

Because tensor cores are such an important part of the Titan V, it is only natural that we utilize them in our tests. And as AnandTech is not an ML research organization, developing our own robust, benchmarkable model in a given framework was not an option. Similarly, we are limited in how far we can modify tests and models ourselves. Reworking scripts is one thing, but once you have to go peeling through random Python code for both the models and the various supporting functions of whatever framework, the task becomes a little out of scope.

Meanwhile, DAWNBench and competition-type collections were ultimately designed for researchers and developers to create their own implementations, rather than to provide a generalizable benchmark. Reference implementations maintained by the DL frameworks would still need modification before they could serve as valid benchmarks. And if it isn't obvious already, re-configuring a model for Volta-compatible mixed precision is not something that can (usually) be done on the fly.
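The end-to-end metric DAWNBench reports for training is time-to-accuracy: the wall-clock time until a model first reaches a target validation accuracy, rather than raw images per second. Below is a minimal Python sketch of that calculation, assuming a hypothetical per-epoch log of (elapsed seconds, accuracy) pairs. The log values are invented for illustration, and the 0.94 default simply mirrors the 94% target DAWNBench used for CIFAR10.

```python
from typing import Optional, Sequence, Tuple

# Hypothetical log format: (wall-clock seconds since training started,
# validation accuracy in [0, 1]) recorded at the end of each epoch.
EpochRecord = Tuple[float, float]


def time_to_accuracy(log: Sequence[EpochRecord], target: float = 0.94) -> Optional[float]:
    """Return the elapsed time of the first epoch that meets `target`, or None if none does."""
    for elapsed_s, accuracy in log:
        if accuracy >= target:
            return elapsed_s
    return None


# Illustrative numbers only, not measured Titan V results.
log = [(30.0, 0.71), (60.5, 0.85), (91.2, 0.92), (121.8, 0.941), (152.3, 0.945)]

print(time_to_accuracy(log))               # 121.8
print(time_to_accuracy(log, target=0.95))  # None
```

Because the metric stops the clock at a quality threshold, a faster but less accurate mixed precision run does not automatically win; it has to converge, too.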

As a whole, this actually bears relevance to the Titan V. Tensor cores and mixed precision don't just require explicit support; they require development work. And while the performance boost is clearly evident in particular cases, it's not there in all cases. Even when you have code and datasets that mesh well with the tensor core execution model, at the end of the day neural network processing isn't bottlenecked solely by ALUs. So while the Titan V is capable of being much more powerful than past NVIDIA GPU designs (sometimes massively so), the kinds of performance gains that NVIDIA likes to promote are, understandably, some of the best-case results.
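One concrete reason mixed precision needs development work is that FP16 has far less range than FP32, so small gradient values can round to zero. A commonly used remedy is loss scaling: multiply the loss (and therefore the gradients) by a constant before the FP16 backward pass, then divide it back out before updating FP32 master weights. The following is a minimal Python sketch of that effect, emulating FP16 storage with the standard library's `struct` half-precision format; the gradient values and the 1024 scale factor are assumptions chosen for illustration, not values from our tests.

```python
import struct
from typing import List


def to_fp16(x: float) -> float:
    """Round x to the nearest IEEE 754 half-precision value (struct format 'e')."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def fp16_grads(grads: List[float], loss_scale: float = 1.0) -> List[float]:
    """Emulate storing loss-scaled gradients in FP16, then unscaling them.

    The scaled values pass through FP16, as they would in an FP16 backward
    pass; the division by loss_scale happens in full precision, standing in
    for the unscale step applied before updating FP32 master weights.
    """
    return [to_fp16(g * loss_scale) / loss_scale for g in grads]


# Hypothetical gradient values. The first two are below 2**-25 (half of FP16's
# smallest subnormal, 2**-24), so without scaling they round to zero.
grads = [1e-8, 2e-8, 2e-4]

print(fp16_grads(grads))                   # first two entries become 0.0
print(fp16_grads(grads, loss_scale=1024))  # all three survive, with small rounding error
```

A real implementation also has to handle overflow: here `struct.pack` raises `OverflowError` for values too large for FP16 (whose largest finite value is 65504), and practical loss-scaling schemes lower the scale when that happens. That bookkeeping is left out of this sketch.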

What this means for anyone thinking about purchasing the Titan V for compute needs is that investing in a Titan V means investing in mixed precision DL models and/or WMMA-based GEMM acceleration for HPC (with the assistance of CUTLASS and such). Titan cards have always been prosumer, but the Titan V really makes its mark as a professional consumer card.
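WMMA (CUDA's warp-level matrix multiply-accumulate API, in the `nvcuda::wmma` namespace) exposes tensor cores as fixed-size tile operations: FP16 input tiles, with an FP16 or FP32 accumulator. The block below is not CUDA; it is a plain-Python numerical model, assuming the 16x16x16 tile shape and FP32 accumulation, that shows how a GEMM decomposes into per-tile multiply-accumulate steps of the kind a WMMA kernel issues with `mma_sync`. Its rounding order is an approximation for illustration, not a bit-exact model of Volta's tensor cores, and real kernels (or CUTLASS) add shared-memory staging and warp scheduling that are omitted here.

```python
import struct
from typing import List

Matrix = List[List[float]]
TILE = 16  # assumed m16n16k16 tile shape; WMMA also supports other shapes


def to_fp16(x: float) -> float:
    """Round to IEEE 754 half precision (tensor core inputs)."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def to_fp32(x: float) -> float:
    """Round to IEEE 754 single precision (the accumulator)."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


def tiled_gemm_fp16_fp32(a: Matrix, b: Matrix) -> Matrix:
    """C = A @ B with FP16 inputs and FP32 accumulation, computed tile by tile.

    a is M x K and b is K x N; M, N and K must be multiples of TILE.
    Each output tile accumulates over K in TILE-sized steps, one step per
    emulated mma_sync call.
    """
    m, k, n = len(a), len(b), len(b[0])
    assert len(a[0]) == k, "inner dimensions must match"
    assert m % TILE == 0 and n % TILE == 0 and k % TILE == 0
    a16 = [[to_fp16(v) for v in row] for row in a]
    b16 = [[to_fp16(v) for v in row] for row in b]
    c = [[0.0] * n for _ in range(m)]  # FP32 accumulator, zero-filled like fill_fragment
    for i0 in range(0, m, TILE):
        for j0 in range(0, n, TILE):
            for k0 in range(0, k, TILE):  # one emulated mma_sync per k-step
                for i in range(i0, i0 + TILE):
                    for j in range(j0, j0 + TILE):
                        acc = c[i][j]
                        for kk in range(k0, k0 + TILE):
                            # FP16 x FP16 products are exact in FP32; the sum is rounded.
                            acc = to_fp32(acc + a16[i][kk] * b16[kk][j])
                        c[i][j] = acc
    return c


ones = [[1.0] * 32 for _ in range(32)]
print(tiled_gemm_fp16_fp32(ones, ones)[0][0])  # 32.0
```

The point of the FP32 accumulator is that only the inputs lose precision; the running sums over K do not also get squeezed into FP16.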

Meanwhile, in the context of cuDNN/cuBLAS, as well as early mixed precision support with DP4A and FP16x2, tensor cores are a natural evolution in attempting to hit a bullseye with programmable hardware for DL acceleration. That said, the release notes of recent cuDNN versions list various issues with tensor core math and operations, so bugfixing is very much an ongoing process. And tensor cores are even more limited than the phrase 'deep learning matrix-matrix multiplications' implies, though most of those details and implementations are not relevant to end-users.
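DP4A, one of the earlier reduced-precision paths, computes a four-way dot product of packed 8-bit integers and adds it to a 32-bit accumulator in a single instruction (exposed in CUDA as the `__dp4a` intrinsic). Here is a minimal Python emulation of the signed variant. The least-significant-byte-first lane order and the two's-complement wraparound of the accumulator are assumptions made for illustration.

```python
from typing import List, Sequence

SHIFTS = (0, 8, 16, 24)  # assumed lane order: least significant byte first


def pack_s8x4(values: Sequence[int]) -> int:
    """Pack four integers in [-128, 127] into one 32-bit word."""
    assert len(values) == 4 and all(-128 <= v <= 127 for v in values)
    word = 0
    for shift, v in zip(SHIFTS, values):
        word |= (v & 0xFF) << shift  # two's-complement byte
    return word


def unpack_s8x4(word: int) -> List[int]:
    """Split a 32-bit word into four signed 8-bit lanes."""
    lanes = []
    for shift in SHIFTS:
        byte = (word >> shift) & 0xFF
        lanes.append(byte - 256 if byte >= 128 else byte)
    return lanes


def dp4a(a: int, b: int, c: int) -> int:
    """Emulate signed DP4A: sum of four int8 products plus a 32-bit accumulator."""
    total = c + sum(x * y for x, y in zip(unpack_s8x4(a), unpack_s8x4(b)))
    # Assumed int32 register behavior: wrap around on overflow.
    total &= 0xFFFFFFFF
    return total - (1 << 32) if total >= (1 << 31) else total


print(dp4a(pack_s8x4([1, 2, 3, 4]), pack_s8x4([5, 6, 7, 8]), 10))  # 80
```

Tensor cores generalize the same idea (narrow inputs, wide accumulator) from a four-element dot product per instruction to whole matrix tiles.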

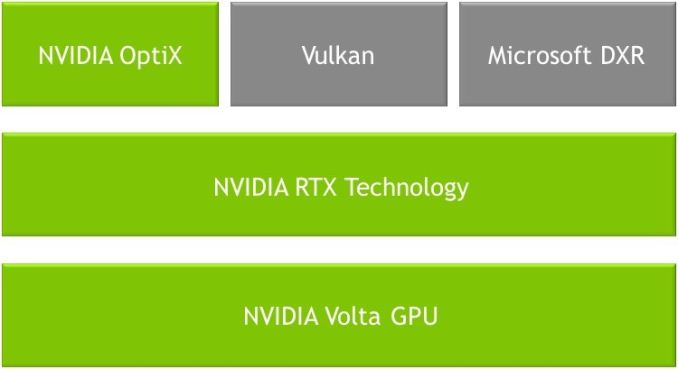

But what about mainstream consumers? What does all of this tensor core development mean for them? Despite the focus here on neural networks, the tensor cores are expected to have some relevance and use in the consumer graphics space. We know that NVIDIA's recent-ish RTX ray tracing technology uses tensor cores to apply denoising to images to make up for a limited number of rays. But at the same time, that type of use case for tensor cores implies a very different product than the Titan V. RTX hardware functionality will be opaque to end-users, much like the supposed near future in which FP16x2/Rapid Packed Math would be used in video games. And development of tensor core utilization needs to reach that point.

One other consumer-focused question NVIDIA will be considering for any kind of mainstream parts is whether to include tensor cores for development and debugging purposes. This is similar to the route NVIDIA already takes today with FP64 functionality, including a handful of FP64 CUDA cores in each GPU so that developers can run FP64 code natively, just very slowly. Right now the Titan V is the only game in town if you need to debug your tensor code on real hardware, but that can certainly (and arguably is likely to) change once mainstream GPUs get a chance to play catch-up.

Meanwhile, NVIDIA's traditional hardware development cadence and redacted Hot Chips talks point to a new consumer lineup in the near future, and it will be very interesting to see what architectural features turn up. What we can say is that the Titan V definitely has the marks of NVIDIA's future GPGPU aspirations. And the Titan V is not merely a deep learning accelerator, because the tensor cores are in essence not merely deep learning accelerators. Moving forward, we're hoping that MLPerf and similar efforts make good headway, so that we can tease out a few more secrets from GPUs.

65 Comments

SirCanealot - Tuesday, July 3, 2018

No overclocking benchmarks. WAT. ¬_¬ (/s)

Thanks for the awesome, interesting write up as usual!

Chaitanya - Tuesday, July 3, 2018

This is more of an enterprise product for consumers, so even if overclocking is enabled, it's something the targeted demographic is not going to use.

Samus - Tuesday, July 3, 2018

woooooooosh

MrSpadge - Tuesday, July 3, 2018

He even put the "end sarcasm" tag (/s) to point out this was a joke.

Ticotoo - Tuesday, July 3, 2018

Where oh where are the MacOS drivers? It took 6 months to get the Pascal Titan drivers.

Hopefully soon

cwolf78 - Tuesday, July 3, 2018

Nobody cares? I wouldn't be surprised if support gets dropped at some point. MacOS isn't exactly going anywhere.

eek2121 - Tuesday, July 3, 2018

Quite a few developers and professionals use Macs. Also college students. By manufacturer market share, Apple probably has the biggest share; if not, it's definitely in the top 5.

mode_13h - Tuesday, July 3, 2018

I doubt it. Linux rules the cloud, and that's where all the real horsepower is at. Lately, anyone serious about deep learning is using Nvidia on Linux. It's only 2nd-tier players, like AMD and Intel, who really stand to gain anything by supporting niche platforms like Macs and maybe even Windows/Azure.

Once upon a time, Apple actually made a rackmount OS X server. I think that line has long since died off.

Freakie - Wednesday, July 4, 2018

Lol, those developers and professionals use their Macs to remote in to their compute servers, not to do any of the number crunching.

The idea of using a personal computer for anything except writing and debugging code is next to unheard of in an environment that requires the kind of power these GPUs are meant to output. The machine they use for the actual computations is, 99.5% of the time, a dedicated server used for nothing but heavy compute tasks, usually with no graphical interface, just a straight command line.

philehidiot - Wednesday, July 4, 2018

If it's just a command line, why bother with a GPU like this? Surely integrated graphics would do?

(Even though this is a joke, I'm not sure I can bear the humiliation of pressing "submit")